Getting started with ML: Colab or self-hosted Jupyter?

The basic trade-off is well known. If you use a hosted environment like Google's Colab or a cloud VM like an Amazon EC2 instance, you don't need your own hardware, but the running costs add up over time. If you self-host, you need to buy dedicated hardware up front, most likely a graphics card.

TL;DR: use Colab.

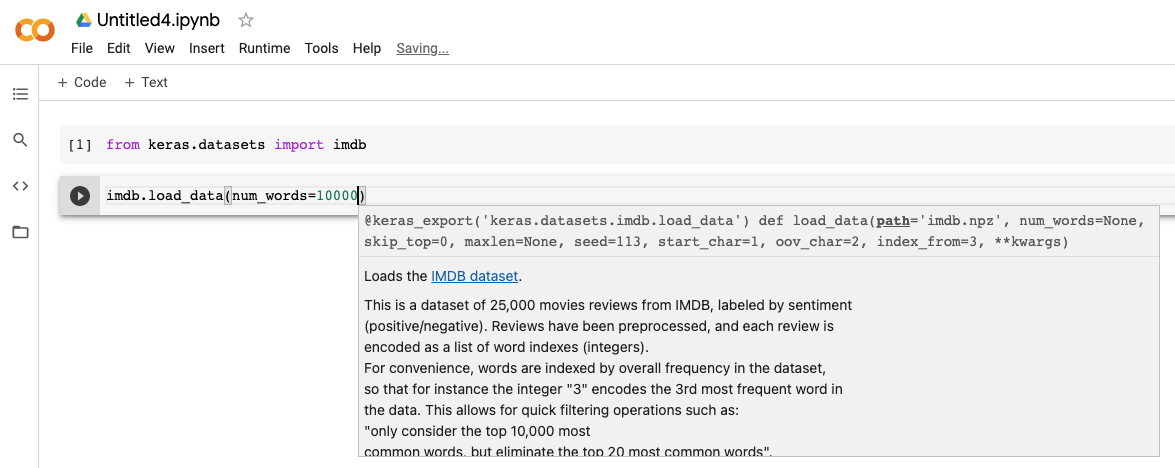

But for a beginner, this trade-off doesn't come into play yet, since most tutorials train their models within minutes. What I need at this stage is an environment that is easy to set up, and here Colab wins hands down. Go to https://colab.research.google.com and that's it: you can type in your first instructions.

Self-hosting

If you want to self-host, you have lots to consider:

- First you need to install Python. You'll have to make sure you use Python 3, and not Python 2.

- Then you need pip, and you need to understand how to install packages with it. Recent Python 3 installers bundle pip, but some Linux distributions package it separately.

- On Jupyter's website, they show how to install the package using pip or a package manager like conda.

- You also don't want to pollute your system-wide Python installation. Because ML-related packages are quite brittle when it comes to dependencies, you want an environment manager to isolate the packages of each project. It turns out there are a few out there, and just figuring out which one to use will take you a while.
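
The checks in the list above can be sketched in a few lines of Python. This is a minimal sketch, not a recommended setup: the helper names (`is_python3`, `has_pip`, `create_env`) are made up for illustration, and it uses the standard library's `venv` module as a stand-in for whichever environment manager you end up choosing.

```python
import sys
import venv
from importlib import util
from pathlib import Path


def is_python3():
    """True if the interpreter running this script is Python 3."""
    return sys.version_info.major == 3


def has_pip():
    """True if the pip package can be found by this interpreter."""
    return util.find_spec("pip") is not None


def create_env(target, with_pip=True):
    """Create an isolated environment at `target` with the stdlib venv module.

    Returns the path of the pyvenv.cfg file that venv writes into the
    new environment directory.
    """
    venv.create(target, with_pip=with_pip)
    return Path(target) / "pyvenv.cfg"


print("Python 3:", is_python3())
print("pip available:", has_pip())
```

Calling `create_env(".venv")` in a project folder would then give you an environment to install Jupyter into, without touching the system-wide packages.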

Environment managers

From a quick search you'll find that there are three main environment managers: virtualenv, pipenv, and conda. I've used virtualenv before and it's alright. pipenv is supposedly the newer alternative, and it provides a slightly cleaner command-line interface. Finally there's conda, which Jupyter mentions.
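
Whichever one you pick, it helps to be able to tell which kind of environment a notebook or script is actually running in. The sketch below is a rough heuristic under stated assumptions: the function name `describe_environment` is hypothetical, and it relies on my understanding that pipenv sets `PIPENV_ACTIVE`, that conda sets `CONDA_DEFAULT_ENV`, and that virtualenv-style environments make `sys.prefix` differ from `sys.base_prefix`.

```python
import os
import sys


def describe_environment(prefix=None, base_prefix=None, environ=None):
    """Guess which environment manager is active (heuristic only)."""
    prefix = sys.prefix if prefix is None else prefix
    if base_prefix is None:
        base_prefix = getattr(sys, "base_prefix", sys.prefix)
    environ = os.environ if environ is None else environ

    # pipenv wraps a virtualenv, so check for it before the generic case.
    if environ.get("PIPENV_ACTIVE") == "1":
        return "pipenv"
    # Assumed to be set whenever a conda environment (including base) is active.
    if environ.get("CONDA_DEFAULT_ENV"):
        return "conda"
    # Inside a virtualenv/venv, sys.prefix points at the environment directory.
    if prefix != base_prefix:
        return "virtualenv/venv"
    return "system"


print(describe_environment())
```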

If you choose conda, you have the choice between Miniconda and Anaconda. Anaconda comes preinstalled with a bunch of packages related to data science. See conda install page. To be honest, I'd rather start from a clean slate and install only the packages I need, instead of downloading the internet and still not getting the latest package versions.
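
To see how clean a given slate really is, you can list what is already installed in the current environment. This is a small sketch using the standard library's `importlib.metadata`; the helper name `installed_packages` is made up for illustration.

```python
from importlib import metadata


def installed_packages():
    """Return a sorted list of (name, version) pairs for installed distributions."""
    found = set()
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name:  # skip entries with missing metadata
            found.add((name, dist.version))
    return sorted(found, key=lambda pair: pair[0].lower())


packages = installed_packages()
print(f"{len(packages)} packages installed")
for name, version in packages[:10]:
    print(name, version)
```

Running this in a fresh environment and again in a preloaded one gives a rough feel for how much comes along that you never asked for.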

When I transition to self-hosted Jupyter, I'll use pipenv.